Orange: Model Evaluation Metrics

Every machine learning model tries to solve a problem with a different objective using a different dataset, so it is important to understand the context before choosing a metric. Usually, the answers to the following questions help us choose a suitable metric:

- Type of task: regression or classification?

- What is the business goal?

- What does the distribution of the target variable look like?

Regression Metrics

- Mean Squared Error (MSE)
- Root Mean Squared Error (RMSE)
- Mean Absolute Error (MAE)
- R Squared (R²)
- Adjusted R Squared (R²)
- Mean Square Percentage Error (MSPE)
- Mean Absolute Percentage Error (MAPE)
- Root Mean Squared Logarithmic Error (RMSLE)

Mean Squared Error (MSE)

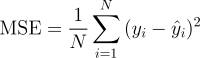

This is perhaps the simplest and most common metric for regression evaluation, but also probably the least useful. It is defined by the equation

$$\mathrm{MSE} = \frac{1}{N} \sum_{i=1}^{N} (y_i - \hat{y}_i)^2$$

where yᵢ is the actual expected output, ŷᵢ is the model's prediction, and N is the number of samples.

MSE essentially measures the average squared error of our predictions. For each point, it computes the squared difference between the prediction and the target, then averages those values.

The higher this value, the worse the model. MSE is never negative, because we square the individual prediction errors before summing them, and it is zero for a perfect model.
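The calculation described above can be sketched in a few lines of plain Python. This is only an illustration: the function name `mean_squared_error` and the sample values are assumptions made here, not something taken from Orange.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between targets and predictions."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


# Made-up targets and predictions, for illustration only.
y_true = [3.0, 5.0, 2.0, 7.0]
y_pred = [2.0, 5.0, 4.0, 8.0]

mse = mean_squared_error(y_true, y_pred)           # (1 + 0 + 4 + 1) / 4 = 1.5
mse_perfect = mean_squared_error(y_true, y_true)   # 0.0 for a perfect model
```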

Advantage: useful when there are unexpected values we should care about, that is, very high or very low values that deserve attention.

Disadvantage: if we make a single very bad prediction, squaring makes the error even larger and can cause the metric to overstate how bad the model is. This is particularly problematic with noisy data (that is, data that for whatever reason is not entirely reliable): even a "perfect" model may have a high MSE in that situation, so it becomes hard to judge how well the model is doing. On the other hand, if all errors are small, or more precisely smaller than 1, the opposite effect occurs: we may underestimate how bad the model is.
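A small numeric sketch may make both effects concrete. The error values below are invented for illustration, and `mean_squared` is a hypothetical helper that works directly on per-sample errors.

```python
def mean_squared(errors):
    """Mean of the squared per-sample errors."""
    return sum(e * e for e in errors) / len(errors)


# Made-up per-sample errors (prediction minus target).
mostly_good = [1.0, 1.0, 1.0, 1.0]
one_bad_miss = [1.0, 1.0, 1.0, 10.0]   # same as above, but with one large miss
all_small = [0.5, 0.5, 0.5, 0.5]       # every error is below 1

mse_mostly_good = mean_squared(mostly_good)     # 1.0
mse_one_bad_miss = mean_squared(one_bad_miss)   # (1 + 1 + 1 + 100) / 4 = 25.75
mse_all_small = mean_squared(all_small)         # 0.25, smaller than the errors themselves
```

A single miss of 10 raises the score from 1.0 to 25.75, while errors of 0.5 shrink to 0.25 once squared.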

Note that if we want a single constant prediction, the best one is the mean of the target values. It can be found by setting the derivative of the total error with respect to that constant to zero and solving the resulting equation.
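As a sketch of that derivation, write c for the constant prediction (a symbol introduced here for illustration) and differentiate the total squared error:

```latex
\begin{aligned}
\frac{d}{dc} \sum_{i=1}^{N} (y_i - c)^2 &= -2 \sum_{i=1}^{N} (y_i - c) = 0 \\
\Rightarrow \quad c^{*} &= \frac{1}{N} \sum_{i=1}^{N} y_i = \bar{y}
\end{aligned}
```

Dividing the total error by N to obtain MSE does not change where the minimum lies.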

Root Mean Squared Error (RMSE)

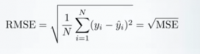

RMSE is simply the square root of MSE. The square root is introduced so that the scale of the errors matches the scale of the targets.

Now, it is important to understand in what sense RMSE is similar to MSE, and how they differ.

First, they are similar in terms of their minimizers: every minimizer of MSE is also a minimizer of RMSE and vice versa, because the square root is an increasing function. For example, if we have two sets of predictions, A and B, and the MSE of A is greater than the MSE of B, then we can be sure that the RMSE of A is greater than the RMSE of B. The same holds in the opposite direction.

What does this mean for us?

It means that if the target metric is RMSE, we can still compare our models using MSE, because MSE orders models in the same way as RMSE. We can therefore optimize MSE instead of RMSE.
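The ordering argument can be checked with a short sketch. The three candidate prediction sets and the helper names `mse` and `rmse` are assumptions made for this example.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error: the square root of MSE."""
    return math.sqrt(mse(y_true, y_pred))


# Made-up targets and three hypothetical sets of predictions.
y_true = [10.0, 20.0, 30.0, 40.0]
candidates = {
    "A": [12.0, 18.0, 33.0, 39.0],   # MSE = 4.5
    "B": [11.0, 20.0, 30.0, 41.0],   # MSE = 0.5
    "C": [15.0, 25.0, 25.0, 45.0],   # MSE = 25.0
}

# Sort model names from best to worst under each metric.
rank_by_mse = sorted(candidates, key=lambda name: mse(y_true, candidates[name]))
rank_by_rmse = sorted(candidates, key=lambda name: rmse(y_true, candidates[name]))
```

Both rankings come out as B, A, C, so choosing a model by MSE gives the same winner as choosing it by RMSE.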

In fact, MSE is slightly easier to work with, so it is commonly used in place of RMSE. There is, however, a small difference between the two for gradient-based models.

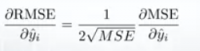

Gradient of RMSE with respect to the i-th prediction

This means that travelling along the MSE gradient is equivalent to travelling along the RMSE gradient, but at a different rate, and that rate depends on the MSE score itself.

So although RMSE and MSE are very similar in terms of model scoring, they are not immediately interchangeable for gradient-based methods. We will probably need to adjust some parameters, such as the learning rate.
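By the chain rule, the RMSE gradient is the MSE gradient scaled by 1 / (2 · RMSE). The sketch below, with made-up data and hypothetical helper names, compares that analytic form against a finite-difference estimate. It assumes the MSE is not zero, since the scaling factor is undefined there.

```python
import math


def mse(y_true, y_pred):
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def mse_gradient(y_true, y_pred):
    """Partial derivatives of MSE with respect to each prediction."""
    n = len(y_true)
    return [2.0 * (p - y) / n for y, p in zip(y_true, y_pred)]


def rmse_gradient(y_true, y_pred):
    """Chain rule: d(RMSE)/d(pred_i) = d(MSE)/d(pred_i) / (2 * RMSE)."""
    scale = 1.0 / (2.0 * math.sqrt(mse(y_true, y_pred)))  # undefined when MSE == 0
    return [scale * g for g in mse_gradient(y_true, y_pred)]


def numeric_rmse_gradient(y_true, y_pred, eps=1e-6):
    """Central finite-difference estimate, used only as a sanity check."""
    grads = []
    for i in range(len(y_pred)):
        up, down = list(y_pred), list(y_pred)
        up[i] += eps
        down[i] -= eps
        diff = math.sqrt(mse(y_true, up)) - math.sqrt(mse(y_true, down))
        grads.append(diff / (2.0 * eps))
    return grads


# Made-up data, for illustration only.
y_true = [3.0, 5.0, 2.0, 7.0]
y_pred = [2.0, 5.0, 4.0, 8.0]

analytic = rmse_gradient(y_true, y_pred)
numeric = numeric_rmse_gradient(y_true, y_pred)
```

Both gradients point in the same direction. Only the step length differs, which is why a learning rate tuned for MSE may need adjusting for RMSE.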

Mean Absolute Error (MAE)

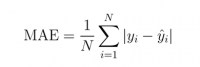

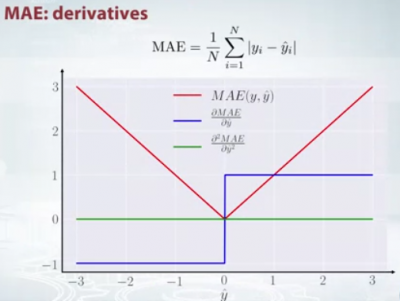

In MAE the error is calculated as the average of the absolute differences between the target values and the predictions. MAE is a linear score, which means that all individual differences are weighted equally in the average. For example, the difference between 10 and 0 counts twice as much as the difference between 5 and 0. The same is not true for RMSE. Mathematically, MAE is calculated using this formula:

$$\mathrm{MAE} = \frac{1}{N} \sum_{i=1}^{N} |y_i - \hat{y}_i|$$

What matters about this metric is that it penalizes large errors less severely than MSE does. It is therefore less sensitive to outliers than mean squared error.

MAE is widely used in finance, where a $10 error is usually exactly twice as bad as a $5 error. The MSE metric, on the other hand, treats a $10 error as four times worse than a $5 error. MAE is easier to justify than RMSE.
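The $5 versus $10 comparison can be reproduced with a minimal sketch. The function names and the single made-up price below are assumptions for illustration.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of the absolute differences between targets and predictions."""
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between targets and predictions."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


# One made-up true price and two hypothetical predictions.
price = [100.0]
off_by_5 = [105.0]
off_by_10 = [110.0]

mae_ratio = mean_absolute_error(price, off_by_10) / mean_absolute_error(price, off_by_5)
mse_ratio = mean_squared_error(price, off_by_10) / mean_squared_error(price, off_by_5)
# mae_ratio is 10 / 5 = 2.0, mse_ratio is 100 / 25 = 4.0
```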

Another important aspect of MAE is its gradient with respect to the predictions. The gradient is a step function: it takes the value -1 when Y_hat is smaller than the target and +1 when it is larger.

The gradient is not defined when the prediction is perfect: when Y_hat equals Y, we cannot evaluate the gradient.

So formally MAE is not differentiable, but in practice, how often do predictions match the target exactly? Even when they do, we can write a simple IF condition that returns zero in that case and the gradient otherwise. Note also that the second derivative is zero everywhere and undefined at the point where the error is zero.
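The IF condition mentioned above might look like the following sketch. The function name is hypothetical, and returning 0 at a perfect prediction is a convention chosen here, not a true derivative.

```python
def absolute_error_gradient(y_true, y_pred):
    """Derivative of |y_pred - y_true| with respect to y_pred for one sample."""
    if y_pred == y_true:
        return 0.0   # gradient is undefined here; 0 is used by convention
    return 1.0 if y_pred > y_true else -1.0


# Made-up target of 3.0 with predictions below, at, and above it.
gradients = [absolute_error_gradient(3.0, p) for p in (2.0, 3.0, 5.0)]
# gradients == [-1.0, 0.0, 1.0]
```

For MAE over N samples, each of these per-sample values is additionally divided by N.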

Note that if we want a single constant prediction, the best one is the median of the target values. It can be found by setting the derivative of the total error with respect to that constant to zero and solving the resulting equation.
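The derivative of the total absolute error is zero when as many targets lie above the constant as below it, which is exactly the median. The sketch below checks this numerically on made-up targets with a coarse grid search. The helper `mae_for_constant` and the grid are assumptions for illustration.

```python
import statistics


def mae_for_constant(targets, c):
    """MAE obtained when every prediction equals the constant c."""
    return sum(abs(y - c) for y in targets) / len(targets)


# Made-up targets with one outlier.
targets = [1.0, 2.0, 3.0, 4.0, 100.0]

median = statistics.median(targets)   # 3.0
mean = statistics.mean(targets)       # 22.0

mae_at_median = mae_for_constant(targets, median)
mae_at_mean = mae_for_constant(targets, mean)

# Brute-force check over constants 0.0, 0.1, ..., 100.0.
grid = [i / 10 for i in range(0, 1001)]
best_constant = min(grid, key=lambda c: mae_for_constant(targets, c))
```

On this data the grid search lands on the median, and predicting the mean gives a noticeably larger MAE because the outlier pulls the mean away from most of the targets.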

R Squared (R²)

Now, what if I told you that the MSE of my model's predictions is 32? Should I improve the model, or is it good enough? What if my MSE were 0.4? In fact, it is hard to tell whether a model is good or not by looking at the absolute value of MSE or RMSE. We would rather measure how much better our model is than a constant baseline.

The coefficient of determination, or R² (sometimes read as R-two), is another metric we can use to evaluate a model. It is closely related to MSE but has the advantage of being scale-free: no matter whether the output values are very large or very small, R² always lies between -∞ and 1.

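R² compares the MSE of the model with the MSE of a baseline:

$$R^2 = 1 - \frac{\mathrm{MSE}(\text{model})}{\mathrm{MSE}(\text{baseline})}$$

When R² is negative, the model is worse than predicting the mean.

The MSE of the model is computed as above, while the MSE of the baseline is defined as:

$$\mathrm{MSE}(\text{baseline}) = \frac{1}{N} \sum_{i=1}^{N} (y_i - \bar{y})^2$$

where ȳ is the mean of the observed yᵢ.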

To make this clearer, the baseline MSE can be thought of as the MSE that the simplest possible model would achieve. The simplest possible model is to always predict the mean of all samples. A value close to 1 indicates a model with almost zero error, and a value close to zero indicates a model very close to the baseline.

In short, R² expresses how good our model is relative to the naive mean model.
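A minimal sketch of this comparison, computing R² as one minus the ratio of the model MSE to the baseline MSE. The function name `r_squared` and the data are made up for illustration, and the sketch assumes the targets are not all identical, since the baseline MSE would then be zero.

```python
def r_squared(y_true, y_pred):
    """R² = 1 - MSE(model) / MSE(baseline), with the mean as the baseline."""
    n = len(y_true)
    mean_y = sum(y_true) / n
    mse_model = sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n
    mse_baseline = sum((y - mean_y) ** 2 for y in y_true) / n  # must be non-zero
    return 1.0 - mse_model / mse_baseline


# Made-up targets and predictions.
y_true = [1.0, 2.0, 3.0, 4.0, 5.0]

r2_good = r_squared(y_true, [1.1, 1.9, 3.2, 3.8, 5.0])   # close to 1 (about 0.99)
r2_baseline = r_squared(y_true, [3.0] * 5)               # always predict the mean: 0.0
r2_perfect = r_squared(y_true, y_true)                   # 1.0
```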

Common misconception: many articles on the web state that R² ranges between 0 and 1, which is not actually true. The maximum value of R² is 1, but the minimum can be minus infinity.

For example, consider a really bad model that predicts a highly negative value for every observation even though y_actual is positive. In this case, R² will be less than 0. This is a very unlikely scenario, but the possibility still exists.
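To illustrate that scenario, the sketch below scores a hypothetical model that predicts -10 for every observation while all targets are positive. The data and the helper `r_squared` are assumptions made for this example.

```python
def r_squared(y_true, y_pred):
    """R² = 1 - MSE(model) / MSE(baseline), with the mean as the baseline."""
    n = len(y_true)
    mean_y = sum(y_true) / n
    mse_model = sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n
    mse_baseline = sum((y - mean_y) ** 2 for y in y_true) / n
    return 1.0 - mse_model / mse_baseline


# Made-up positive targets and a model that always predicts -10.
y_actual = [1.0, 2.0, 3.0, 4.0, 5.0]
y_bad = [-10.0] * 5

r2_bad = r_squared(y_actual, y_bad)
# MSE(model) = 855 / 5 = 171, MSE(baseline) = 2, so R² = 1 - 85.5 = -84.5
```

The further the constant prediction moves from the targets, the more negative R² becomes, so there is no lower bound.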

MAE vs MSE

MAE is usually more robust (less sensitive to outliers) than MSE, but this does not mean that MAE is always the better choice. The following questions help us decide:

Conclusion

In this article we covered several important regression metrics. We first discussed Mean Squared Error and saw that the best constant for it is the mean of the target values. Root Mean Squared Error and R² are very similar to MSE from an optimization perspective. We then discussed Mean Absolute Error and when one would prefer MAE over MSE.

References